Why Defense Tech Companies Are Fleeing Claude After the Pentagon’s Anthropic Blacklist

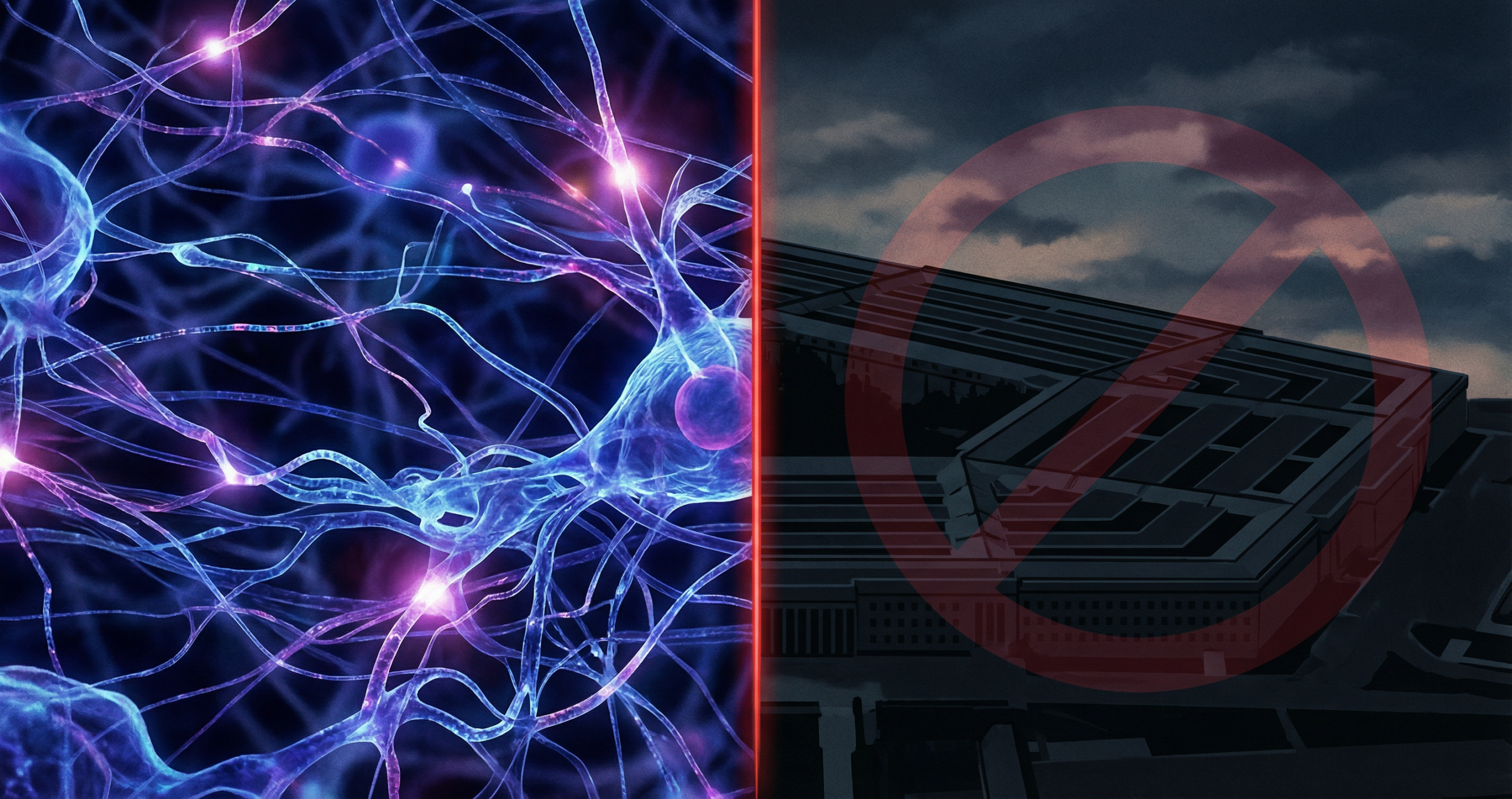

Anthropic built one of the most capable and trusted AI models in the world. That reputation is now at the center of a political and contractual crisis that is reshaping how the US defense industry thinks about AI vendor risk.

Defense Secretary Pete Hegseth formally designated Anthropic a “supply chain risk,” and the ripple effects were immediate. Companies that do business with the US military began reviewing their Claude contracts, and many are now pivoting to competing models. Meanwhile, OpenAI’s ChatGPT reportedly saw a surge in uninstalls after its own DoD deal was announced, adding another layer of complexity to AI’s complicated relationship with the military.

What Happened: The Pentagon Designates Anthropic a Supply Chain Risk

The designation came without extensive public explanation, which is standard practice in defense procurement decisions. What it means in practice is significant: companies holding military contracts that include Anthropic’s Claude as part of their technology stack now face pressure to find alternatives. Using a product from a designated supply chain risk creates compliance, security clearance, and contract renewal complications that most defense-tech firms are not willing to absorb.

The timing is notable. Anthropic has been pursuing enterprise clients aggressively, and its safety-focused positioning had made it a preferred choice for organizations that wanted capable AI with a governance-first reputation. That positioning did not protect it from the Pentagon’s designation.

What “Supply Chain Risk” Means in Defense Contracting

In the defense sector, a supply chain risk designation is not a criminal finding or a security breach disclosure. It is a risk management classification that signals to procurement officials and contractors that a particular vendor requires heightened scrutiny or should be avoided in defense applications.

The practical result is that contractors must either eliminate the flagged vendor from their supply chain, seek a formal waiver (rare and time-consuming), or risk non-compliance findings during audits. For companies whose entire revenue model depends on defense contracts, the choice is effectively made for them.

The CEO Dispute: Dario Amodei vs OpenAI

The situation became more complicated when Anthropic CEO Dario Amodei publicly called OpenAI’s messaging around its own military deal “straight up lies.” The dispute between the two leading AI labs escalated into a public disagreement about who is saying what to the military and whether AI companies are being honest about the nature of their partnerships with the Department of Defense.

Amodei’s statement was unusually direct for a tech CEO, especially one navigating a politically sensitive environment. It signals that the competition between Anthropic and OpenAI has moved beyond product capabilities into a fight over narrative and institutional trust at the highest levels of government.

Context: OpenAI announced a major partnership with the DoD that expanded its military use cases significantly. Anthropic’s position on military applications has historically been more cautious, which makes the supply chain risk designation look particularly ironic.

Defense-Tech Companies Abandoning Claude: The Business Reality

Multiple defense-tech firms have confirmed they are pivoting away from Claude and toward competing models, primarily OpenAI’s GPT-4o and Microsoft’s Azure-based AI solutions. The speed of these transitions is notable. In enterprise software, migrations of this kind typically take months or years. The urgency here reflects how seriously the defense sector takes compliance risk.

The concern is not primarily that Claude is dangerous or ineffective. The concern is liability and contract status. No defense contractor wants to explain to a contracting officer why they are using a vendor the DoD has flagged. The designation alone is sufficient to trigger a vendor review regardless of technical merit.

Which AI Models Are Benefiting From the Shift

- OpenAI GPT-4o and GPT-4 Turbo via Microsoft Azure Government Cloud

- Microsoft Copilot for Government (which runs on OpenAI models)

- Google Gemini for Government via Google Cloud Assured Workloads

- Palantir AI Platform (AIP), which integrates multiple foundation models

The Broader Implication: AI Companies and Government Trust

The Anthropic situation exposes a structural vulnerability that every AI company selling to government or regulated industries faces. Technical excellence is insufficient for institutional adoption. Trust, political alignment, compliance posture, and the ability to navigate procurement bureaucracy matter as much as the quality of the model itself.

Anthropic’s predicament is a case study in how quickly institutional relationships can become contested territory when AI intersects with national security. The company built its brand on safety and responsibility. That brand is now being tested not by a safety incident but by a political classification that the company had little power to influence.

What Comes Next for Anthropic

Anthropic is not going anywhere. Its commercial enterprise business outside the defense sector remains robust, and the company’s research reputation is undamaged. The question is whether this episode changes how the company approaches government markets in the future.

For the broader AI industry, the lesson is clear: selling AI to the government is a different business than selling AI to enterprises. The rules, the risks, and the relationships are governed by systems that move slowly and unpredictably, and technical merit alone does not guarantee a seat at the table.

Bottom Line: The Anthropic Pentagon blacklist is a business setback, not a death blow. But it is a reminder that in the defense AI market, political and procurement dynamics matter as much as technology quality.
